COLOR RECOGNITION UNDER ILLUMINATION SHIFT: A CNN STUDY WITH LOIO EVALUATION AND RELIABILITY ANALYSIS ON SADACOLORDATASET (SCD)

DOI:

https://doi.org/10.71146/kjmr898

Keywords:

Color recognition, illumination shift, domain generalization, calibration, reliability diagrams, MobileNet, SadaColorDataset (SCD), LOIO evaluation

Abstract

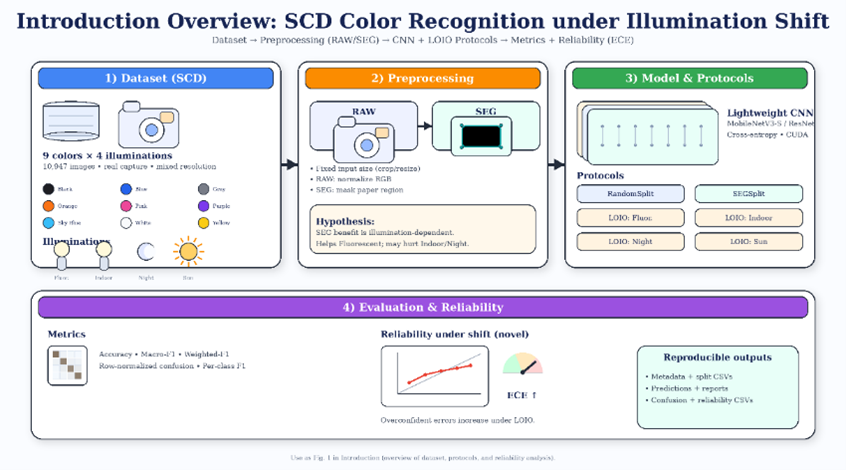

Color recognition looks easy on paper, yet it often breaks in practice because illumination spectra, auto white balance, exposure, and in-camera processing shift the observed color distributions. To study this failure mode in a controlled but realistic setting, we use SadaColorDataset (SCD), a color-paper dataset with 9 classes (Black, Blue, Gray, Orange, Pink, Purple, Sky Blue, White, Yellow) captured under four illuminations (Fluorescent_Light, Indoor, Indoor_Night, Sunlight), totaling 10,843 images. We benchmark lightweight CNNs (MobileNet/ResNet family) with two input variants: RAW images and a simple SEG pipeline intended to suppress background influence. Evaluation is reported under standard RandomSplit/SEGSplit as well as a strict leave-one-illumination-out (LOIO) protocol in which the test domain is an unseen lighting condition. While in-domain splits are near-saturated, LOIO exposes a clear robustness gap: performance drops substantially when illumination changes. Segmentation is also not uniformly beneficial: SEG improves Fluorescent LOIO but degrades Indoor and Indoor_Night LOIO, revealing an illumination-dependent tradeoff. We further analyze prediction confidence and show that calibration worsens under shift; reliability diagrams and expected calibration error (ECE) indicate frequent overconfident errors in LOIO settings. Beyond reporting accuracy, we provide a reproducible pipeline, split protocol, and error analyses that clarify which color pairs fail under specific lighting. On LOIO, we obtain 0.903/0.893 (Acc/Macro-F1) for Fluorescent with SEG, 0.947/0.912 for Indoor with RAW, and 0.888/0.879 for Indoor_Night with RAW.
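The LOIO protocol and the reported metrics (accuracy, Macro-F1, ECE) can be made concrete with a short sketch. The code below is a minimal, dependency-free Python illustration, not the authors' released pipeline: the `(path, label, illumination)` sample tuples, the function names, and the choice of 15 equal-width confidence bins for ECE are assumptions made for the example.

```python
import math

# Class and illumination names as listed in the abstract.
SCD_CLASSES = ["Black", "Blue", "Gray", "Orange", "Pink",
               "Purple", "Sky Blue", "White", "Yellow"]
ILLUMINATIONS = ["Fluorescent_Light", "Indoor", "Indoor_Night", "Sunlight"]


def loio_splits(samples):
    """Yield (held_out, train, test) once per illumination.

    `samples` is a hypothetical list of (path, label, illumination) tuples.
    The held-out illumination never appears in the training set.
    """
    for held_out in ILLUMINATIONS:
        train = [s for s in samples if s[2] != held_out]
        test = [s for s in samples if s[2] == held_out]
        yield held_out, train, test


def macro_f1(y_true, y_pred, classes=SCD_CLASSES):
    """Unweighted mean of per-class F1; a class with no TP, FP or FN scores 0."""
    scores = []
    for c in classes:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


def expected_calibration_error(confidences, correct, n_bins=15):
    """ECE over equal-width bins (lo, hi] of the max-softmax confidence.

    ECE = sum over bins b of (|B_b| / n) * |acc(B_b) - conf(B_b)|
    """
    n = len(confidences)
    counts = [0] * n_bins
    conf_sum = [0.0] * n_bins
    hit_sum = [0.0] * n_bins
    for c, ok in zip(confidences, correct):
        # ceil(c * n_bins) - 1 maps (lo, hi] to a bin index;
        # clamping puts 0.0 in the first bin and guards against c > 1.
        b = min(max(math.ceil(c * n_bins) - 1, 0), n_bins - 1)
        counts[b] += 1
        conf_sum[b] += c
        hit_sum[b] += 1.0 if ok else 0.0
    # (|B|/n) * |acc - conf| simplifies to |hit_sum - conf_sum| / n per bin.
    return sum(abs(hit_sum[b] - conf_sum[b]) / n
               for b in range(n_bins) if counts[b])
```

In a LOIO run, one would train on `train`, record for each `test` image the maximum softmax probability and whether the argmax matched the label, and pass those lists to `expected_calibration_error`. The per-bin pairs `hit_sum[b] / counts[b]` (accuracy) and `conf_sum[b] / counts[b]` (mean confidence) are the points a reliability diagram plots; overconfidence appears as bins whose mean confidence exceeds their accuracy.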

References

[1] G. Buchsbaum, “A spatial processor model for object colour perception,” Journal of the Franklin Institute, vol. 310, no. 1, pp. 1–26, 1980. https://doi.org/10.1016/0016-0032(80)90058-7

[2] E. H. Land and J. J. McCann, “Lightness and Retinex theory,” Journal of the Optical Society of America, 1971. https://doi.org/10.1364/JOSA.61.000001

[3] G. D. Finlayson and E. Trezzi, “Shades of Gray and colour constancy,” in Proc. Color Imaging Conf., 2004.

[4] J. van de Weijer, T. Gevers, and A. Gijsenij, “Edge-based color constancy,” IEEE Transactions on Image Processing, 2007. https://doi.org/10.1109/TIP.2007.901808

[5] Y. Hu, B. Wang, and S. Lin, “FC4: Fully convolutional color constancy with confidence-weighted pooling,” in Proc. CVPR, 2017. https://openaccess.thecvf.com/content_cvpr_2017/papers/Hu_FC4_Fully_Convolutional_CVPR_2017_paper.pdf

[6] G. Hemrit, M. F. Pedersen, J. A. Larsen, and J. Y. Hardeberg, “Rehabilitating the ColorChecker dataset for illuminant estimation,” 2018.

[7] C. Aytekin et al., “INTEL-TUT dataset for camera invariant color constancy,” 2017. https://arxiv.org/pdf/1906.01340

[8] F. Laakom et al., “A color constancy dataset: INTEL-TAU,” 2019. https://doi.org/10.1109/ACCESS.2021.3064382

[9] K. He, X. Zhang, S. Ren, and J. Sun, “Deep residual learning for image recognition,” in Proc. CVPR, 2016. https://doi.org/10.1109/CVPR.2016.90

[10] A. Howard et al., “Searching for MobileNetV3,” in Proc. ICCV, 2019. https://doi.org/10.1109/ICCV.2019.00140

[11] M. Tan and Q. Le, “EfficientNet: Rethinking model scaling for convolutional neural networks,” in Proc. ICML, 2019. https://proceedings.mlr.press/v97/tan19a/tan19a.pdf

[12] I. Gulrajani and D. Lopez-Paz, “In search of lost domain generalization,” in Proc. ICLR, 2021. https://openreview.net/pdf?id=lQdXeXDoWtI

[13] H. Yu et al., “Rethinking the evaluation protocol of domain generalization,” in Proc. CVPR, 2024. https://doi.org/10.1371/journal.pone.0320300

[14] C. Guo, G. Pleiss, Y. Sun, and K. Q. Weinberger, “On calibration of modern neural networks,” in Proc. ICML, 2017. https://proceedings.mlr.press/v70/guo17a/guo17a.pdf

[15] M. P. Naeini, G. F. Cooper, and M. Hauskrecht, “Obtaining well calibrated probabilities using Bayesian binning,” in Proc. AAAI, 2015. https://doi.org/10.1609/aaai.v29i1.9602

[16] Y. Ovadia et al., “Can you trust your model’s uncertainty? Evaluating predictive uncertainty under dataset shift,” in Proc. NeurIPS, 2019. https://proceedings.neurips.cc/paper_files/paper/2019/file/8558cb408c1d76621371888657d2eb1d-Paper.pdf

[17] B. Lakshminarayanan, A. Pritzel, and C. Blundell, “Simple and scalable predictive uncertainty estimation using deep ensembles,” in Proc. NeurIPS, 2017. https://proceedings.neurips.cc/paper_files/paper/2017/file/9ef2ed4b7fd2c810847ffa5fa85bce38-Paper.pdf

[18] D. Hendrycks and K. Gimpel, “A baseline for detecting misclassified and out-of-distribution examples,” 2017. https://doi.org/10.48550/arXiv.1610.02136

[19] H. Zhang, M. Cisse, Y. N. Dauphin, and D. Lopez-Paz, “mixup: Beyond empirical risk minimization,” in Proc. ICLR, 2018. https://openreview.net/pdf?id=r1Ddp1-Rb

[20] S. Thulasidasan et al., “On mixup training: Improved calibration and predictive uncertainty for deep neural networks,” in Proc. NeurIPS, 2019. https://doi.org/10.48550/arXiv.1905.11001

[21] A. Gijsenij, T. Gevers, and J. van de Weijer, “Computational color constancy: Survey and experiments,” IEEE Trans. Image Process., 2011. https://ivi.fnwi.uva.nl/isis/publications/2011/GijsenijTIP2011/GijsenijTIP2011.pdf

[22] J. van de Weijer et al., “Learning color names from real-world images,” 2009. https://lear.inrialpes.fr/people/vandeweijer/color_names.html

[23] J. P. de Vries et al., “Emergent color categorization in a neural network trained for object recognition,” 2022. https://doi.org/10.7554/eLife.76472

[24] A. Gomez-Villa et al., “Color names in vision-language models,” 2025. https://doi.org/10.48550/arXiv.2509.22524

[25] N. Banić and S. Lončarić, “Unsupervised learning for color constancy,” 2017. https://doi.org/10.48550/arXiv.1712.00436

[26] “Operational Android malware filtering: Calibrated probabilities and distribution-free guarantees,” Kashf Journal of Multidisciplinary Research, vol. 2, no. 12, pp. 58–73, 2025. https://doi.org/10.71146/kjmr778

[27] B. Raza, S. Bibi, S. Bibi, and A. Nawaz, “SADA COLOR DATASET (SCD): 9 paper colors × 4 illumination conditions for robust color vision evaluation,” Spectrum of Engineering Sciences, vol. 4, no. 2, 2026. https://doi.org/10.5281/zenodo.18844499

License

Copyright (c) 2026 Paras Mangi, Sadaf Bibi, Sadia Bibi (Author)

This work is licensed under a Creative Commons Attribution 4.0 International License.