CALM-FEDIDS: ROBUST FEDERATED INTRUSION DETECTION ON HETEROGENEOUS DATA

DOI:

https://doi.org/10.71146/kjmr795

Keywords:

Federated Learning, FedProx, Intrusion Detection System, Heterogeneity, Security

Abstract

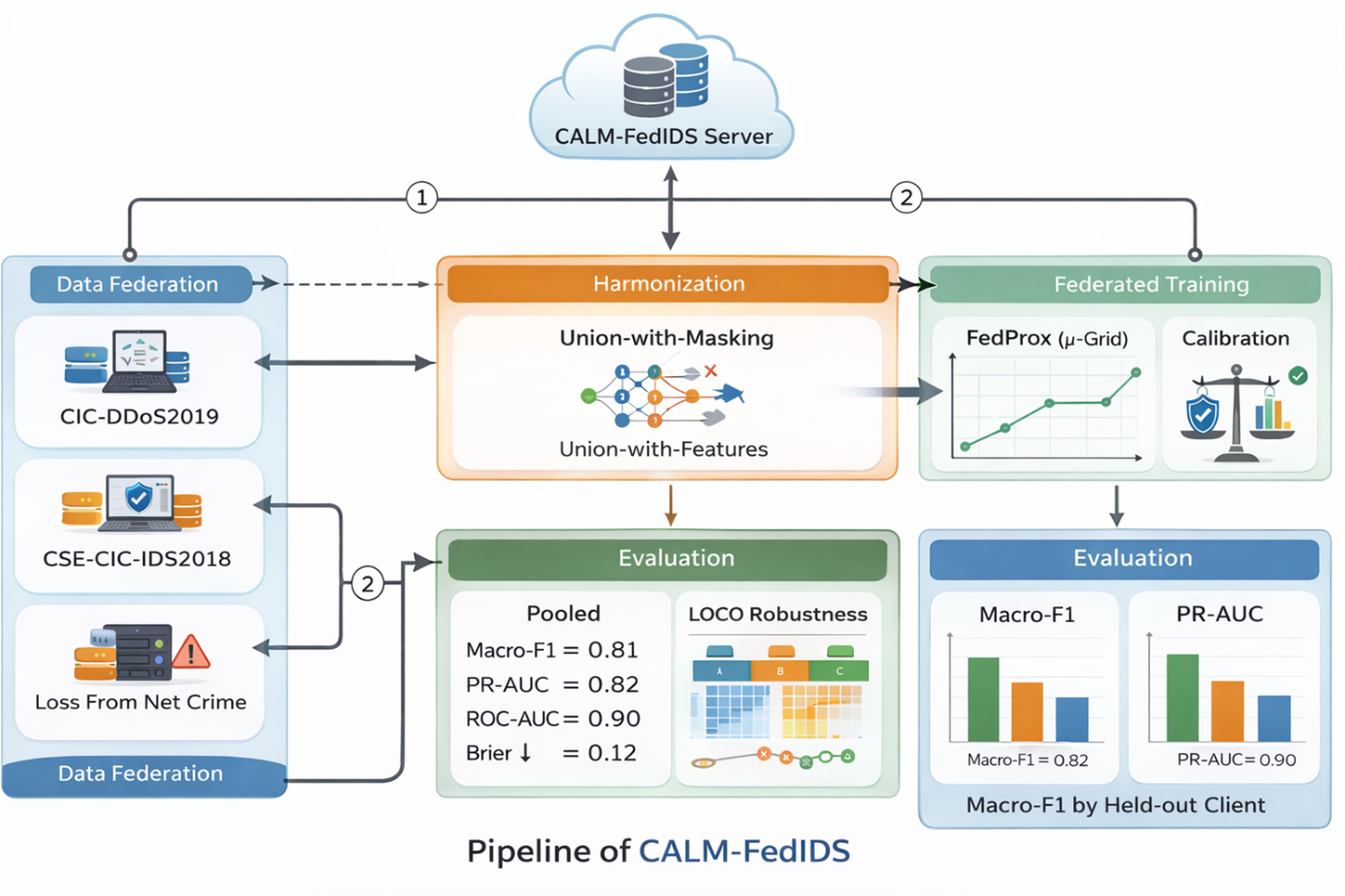

Network attack detectors often fail when data come from different places, follow different schemas, or cannot be centralized for privacy reasons. We study this practical setting using five real datasets (CIC-DDoS2019, CSE-CIC-IDS2018, Friday-WorkingHours-Afternoon-DDoS, Loss-From-Net-Crime, and CyS_Attacks_Dataset) that differ in feature names, class balance, and file formats. To the best of our knowledge, prior IDS work has not rigorously trained and evaluated on truly heterogeneous, multi-source datasets without centralizing raw data. Our goal is a deployable detector that (i) learns without pooling raw data, (ii) remains robust when a site has new or missing features, and (iii) produces calibrated probabilities that translate directly into risk thresholds. We propose CALM-FedIDS, a Calibrated, Alignment-and-Masking federated method built on FedProx. First, we harmonize features by name and create a union-with-masking representation. Second, we train a lightweight logistic model with FedProx, selecting the proximal coefficient μ by grid search, so that sites with non-IID data can still contribute safely. We evaluate with leave-one-client-out (LOCO) hold-out to mimic deployment at an unseen site, and we report class-imbalance-aware metrics (macro-F1, PR-AUC) and calibration measures (reliability curves, Brier score). We also compare against a centralized oracle and per-client baselines. On pooled evaluation, CALM-FedIDS achieves strong discrimination (ROC-AUC ≈ 0.913, PR-AUC ≈ 0.829) and good probability quality, outperforming the centralized oracle baseline (ROC-AUC ≈ 0.828, PR-AUC ≈ 0.672) while keeping data local. CALM-FedIDS delivers a practical IDS for multi-institution settings: it respects privacy, tolerates schema drift, and outputs calibrated scores that operations teams can threshold.
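To make the two core steps concrete, the following is a minimal, dependency-free sketch of union-with-masking alignment followed by FedProx rounds on a logistic model. It is an illustration under stated assumptions, not the authors' implementation: the function names, the feature names (`flow_duration`, `pkt_rate`), the choice to append mask indicators as extra model inputs, full-batch gradient descent, and sample-weighted averaging at the server are all hypothetical choices made for this example.

```python
import math

def align_with_mask(records, union_features):
    """Map each record (dict: feature name -> value) onto the union schema.

    Missing features are filled with 0.0 and flagged by a 0.0 mask entry.
    Each output row is the aligned values followed by the mask indicators.
    """
    rows = []
    for rec in records:
        values = [float(rec.get(name, 0.0)) for name in union_features]
        mask = [1.0 if name in rec else 0.0 for name in union_features]
        rows.append(values + mask)
    return rows

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def predict_proba(w, x):
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)))

def fedprox_local_update(w_global, X, y, mu=0.1, lr=0.1, epochs=20):
    """Minimise log-loss + (mu / 2) * ||w - w_global||^2 by gradient descent."""
    w = list(w_global)
    n = len(X)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for xi, yi in zip(X, y):
            err = predict_proba(w, xi) - yi
            for j, xj in enumerate(xi):
                grad[j] += err * xj / n
        # The proximal term mu * (w - w_global) pulls local weights
        # back toward the current global model.
        w = [wj - lr * (gj + mu * (wj - g0))
             for wj, gj, g0 in zip(w, grad, w_global)]
    return w

def fedprox_round(w_global, clients, mu=0.1):
    """One round: local FedProx updates, then sample-weighted averaging."""
    total = sum(len(y) for _, y in clients)
    new_w = [0.0] * len(w_global)
    for X, y in clients:
        w_local = fedprox_local_update(w_global, X, y, mu=mu)
        for j, wj in enumerate(w_local):
            new_w[j] += wj * len(y) / total
    return new_w

# Toy data (hypothetical): site B lacks the "flow_duration" feature.
site_a = [{"flow_duration": 0.2, "pkt_rate": 0.9},
          {"flow_duration": 0.8, "pkt_rate": 0.1}]
site_b = [{"pkt_rate": 0.95}, {"pkt_rate": 0.05}]
labels_a, labels_b = [1, 0], [1, 0]

# The union schema is built from feature names only; raw rows stay local.
union = sorted({name for rec in site_a + site_b for name in rec})
clients = [(align_with_mask(site_a, union), labels_a),
           (align_with_mask(site_b, union), labels_b)]

w = [0.0] * (2 * len(union))  # one weight per value plus one per mask bit
for _ in range(50):
    w = fedprox_round(w, clients, mu=0.1)
```

Only the weight vectors cross site boundaries in this sketch. Setting `mu=0` reduces the local objective to plain log-loss, which corresponds to FedAvg-style local training.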
For deployment, (1) maintain a shared union-with-masking schema log across partners, (2) select μ using a small validation exchange of metrics only, (3) monitor calibration and update the post-hoc calibrator (e.g., isotonic regression) if traffic shifts, and (4) extend with secure aggregation or differential privacy when policy requires stronger guarantees.
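Recommendation (3) can be sketched as follows: compute the Brier score on recent labeled traffic and, if it degrades, refit an isotonic calibrator. This is a minimal pure-Python illustration using the pool-adjacent-violators algorithm. The function names and the toy scores are hypothetical, and a production system would more likely use an established implementation such as scikit-learn's `IsotonicRegression`, fitted on held-out data rather than on the data it is evaluated on.

```python
def brier_score(probs, labels):
    """Mean squared difference between predicted probability and 0/1 label."""
    return sum((p - y) ** 2 for p, y in zip(probs, labels)) / len(labels)

def fit_isotonic(scores, labels):
    """Pool-adjacent-violators fit of a non-decreasing step function.

    Returns a list of (upper_score, calibrated_probability) pairs,
    sorted by upper_score.
    """
    blocks = []  # each block: [sum_of_labels, count, max_score_in_block]
    for s, y in sorted(zip(scores, labels)):
        if blocks and blocks[-1][2] == s:
            # Tied scores must share one calibrated value.
            blocks[-1][0] += y
            blocks[-1][1] += 1
        else:
            blocks.append([float(y), 1, s])
        # Merge backwards while block means violate monotonicity.
        while (len(blocks) > 1 and
               blocks[-2][0] / blocks[-2][1] > blocks[-1][0] / blocks[-1][1]):
            tot, cnt, hi = blocks.pop()
            blocks[-1][0] += tot
            blocks[-1][1] += cnt
            blocks[-1][2] = hi
    return [(hi, tot / cnt) for tot, cnt, hi in blocks]

def apply_isotonic(model, score):
    """Look up the calibrated probability for a raw score."""
    for upper, prob in model:
        if score <= upper:
            return prob
    return model[-1][1]

# Toy monitoring window (hypothetical raw scores and ground-truth labels).
raw_scores = [0.1, 0.2, 0.3, 0.6, 0.7, 0.8, 0.9, 0.95]
labels = [0, 0, 1, 0, 1, 1, 0, 1]

brier_before = brier_score(raw_scores, labels)
calibrator = fit_isotonic(raw_scores, labels)
calibrated = [apply_isotonic(calibrator, s) for s in raw_scores]
brier_after = brier_score(calibrated, labels)
```

On the fitting data, the isotonic fit cannot have a higher Brier score than the raw scores, because the identity map is itself a non-decreasing function. The meaningful check in deployment is therefore the Brier score on a separate, more recent window.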

License

Copyright (c) 2025 Wahaj Hassan Soomro, Muhammad Arslan Siddiqui (Author)

This work is licensed under a Creative Commons Attribution 4.0 International License.